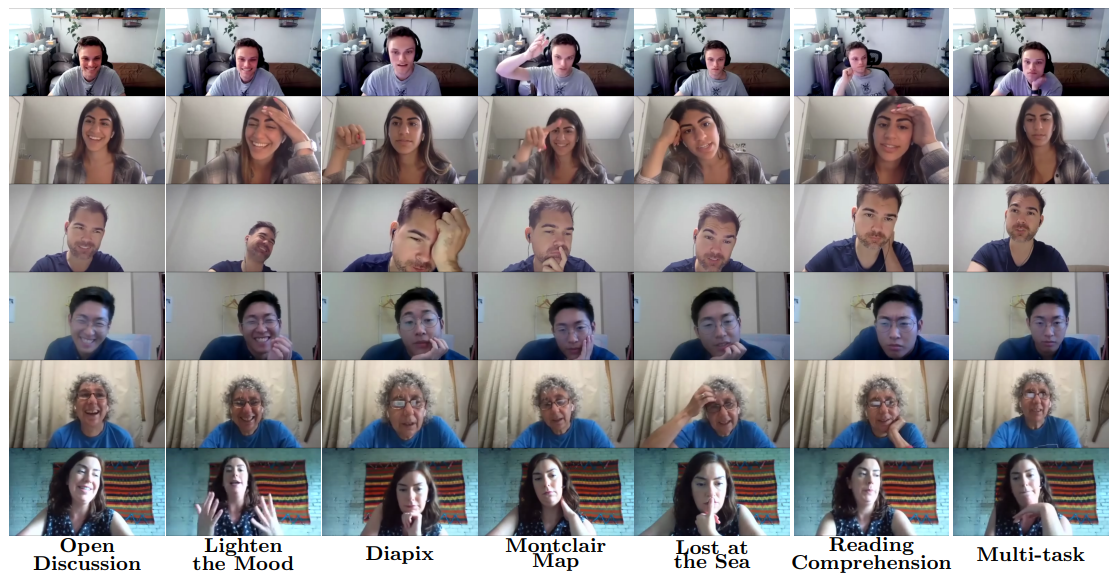

AVCAffe: A Large Scale Audio-Visual Dataset of Cognitive Load and Affect for Remote Work

AAAI 2023


| Attribute | Details |
|---|---|
| Total participants | 106 |
| Gender statistics | Male: 52 (49%); Female: 53 (50%); Non-binary: 1 (1%) |
| Age statistics (years) | 18 to 20: 8; 21 to 30: 75; 31 to 40: 17; 41 to 50: 2; 51 to 60: 4 |
| Countries of origin | Bangladesh, Brazil, Canada, China, Ecuador, Egypt, Germany, Hong Kong, India, Iran, Ireland, Jordan, Mexico, Nigeria, Pakistan, Sweden, USA, Vietnam |
| Total hours | 108 hours |
| Total clips | |
| Total frames | 6,167,022 (about 6.2M) |
| Modalities | Audio, Video |
| Ground truths | Arousal, Valence, Mental Demand, Temporal Demand, Effort, Physical Demand, Frustration, Performance |

@misc{sarkar2022avcaffe,
  title={AVCAffe: A Large Scale Audio-Visual Dataset of Cognitive Load and Affect for Remote Work},
  author={Pritam Sarkar and Aaron Posen and Ali Etemad},
  year={2022},
  eprint={2205.06887},
  archivePrefix={arXiv},
  primaryClass={cs.HC}
}